On Twitter, I’ve had reason to make some graphs to get a feel for data I’ve seen online. I like graphs, so I’m going to send them on this Substack too.

Effects of Hypothetical Pork Ban on Animal Welfare

At the end of this year, California Proposition 12 from 2018 comes into force, requiring pork sold in California to come from farms in which breeding pigs are in pens of at least 24 square feet. Only 4% of pig farmers currently comply with this requirement, and they say it will be difficult to comply in time for the end of the year (if they find it profitable to do at all), which leads to the possibility that soon pork will be hard to get hold of in California.

Veganism win?

One consideration is that removing pork completely from California will cause people to eat more of other types of meat, such as chicken, and chickens generally have worse welfare than pigs. Kyle Bogosian[1] has made a spreadsheet that takes these elasticity effects into account and estimates the amount of animal suffering averted by consuming less meat.

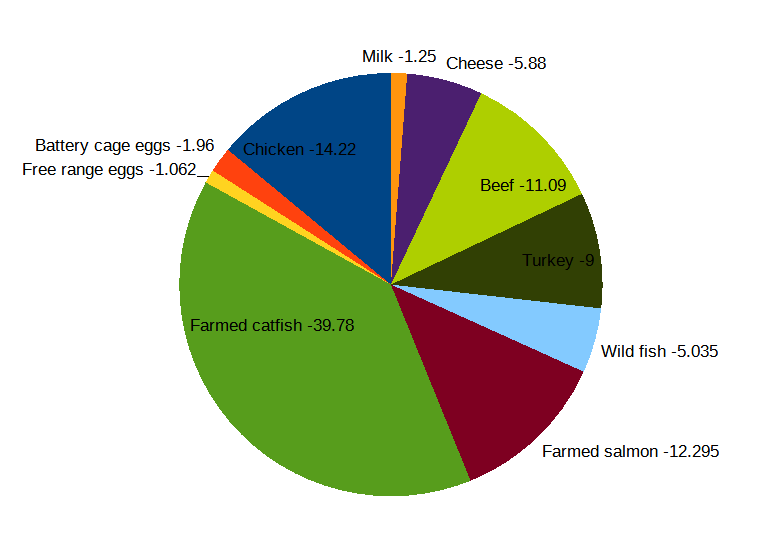

If 1 kg of pork consumption is removed by an external restriction, the products bought to replace it have a welfare impact that looks like this (measured in welfare points, where -100 is one human with all their needs unmet for one day)[2]:

This sums to -102 welfare points, a loss greater than the gain of +86 welfare points from eating 1 kg less pork. Given the uncertainty in all the guesses, I would summarize an external restriction on pork as roughly morally neutral. About 50% of the loss comes from a small amount of extra small fish being eaten.
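For anyone who wants to check the arithmetic, here is a minimal sketch using only the figures quoted above. It assumes the 50% small-fish share applies to the -102 replacement total, and the variable names are my own, not taken from the spreadsheet.

```python
# Figures quoted in the post, in welfare points per 1 kg of pork removed.
replacement_impact = -102  # summed impact of the products bought instead
pork_gain = 86             # gain from eating 1 kg less pork
small_fish_share = 0.5     # assumed to be a share of the replacement impact

# Net effect of the restriction, and the part of the loss due to small fish.
net_change = replacement_impact + pork_gain
small_fish_impact = replacement_impact * small_fish_share

print(f"net change: {net_change} welfare points")
print(f"small fish: {small_fish_impact:.0f} welfare points")
```

The net comes out slightly negative, which is small next to the uncertainty in the inputs.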

Probably Californians will eventually be able to get some pork, even if it ends up naturally being more expensive because the cheapest, lowest-welfare options are unavailable. But interesting to think about. And steer clear of pescatarianism!

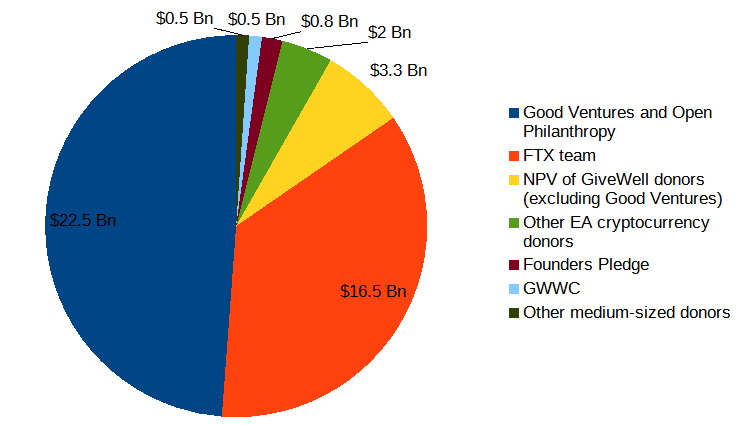

The Size of the EA Community

My rough guess for the size of the EA community was that it was about a third just Cari Tuna and Dustin Moskovitz, a third just Sam Bankman-Fried, and a third everyone else (up from half Open Philanthropy, half everyone else, following the rise of SBF). Benjamin Todd’s post on the matter with some up-to-date estimates of these amounts gave me a chance to visualise it better; actually, it’s half Open Philanthropy, a third Sam Bankman-Fried, and one-sixth everyone else.

My big update on this is that, for normal people, EA meta looks much better. Donating $10,000 a year forever (=$250,000 NPV), as an upper-middle-class person taking a 10% GWWC pledge might, is as good as getting a new Sam Bankman-Fried with 0.0015% probability. 80,000 Hours, with a mere $10 million all-time budget, has caused 1 Sam Bankman-Fried to join the EA community (in a full credit assignment, probably a lot more than just 80,000 Hours contributed; still, it’s pretty good going). The action I’m taking is that I’ve switched my planned donations from the Long-Term Future Fund to the donor lottery, which will make it worth it for me to think much harder about how to use my money to get these enormous amounts of leverage.
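A rough sketch of the numbers behind that comparison, using only the figures above. The discount rate and the dollar value placed on "one new Sam Bankman-Fried" are not stated in the post; they are simply what the quoted figures imply, and the variable names are my own.

```python
# Figures quoted in the post.
annual_donation = 10_000     # dollars per year, forever
npv = 250_000                # stated net present value of that stream
probability = 0.0015 / 100   # 0.0015% chance of a new SBF-sized donor

# For a perpetuity, NPV = payment / discount rate, so the implied rate is:
implied_discount_rate = annual_donation / npv

# If the donation stream is "as good as" that probability of a new donor,
# the implied value of one such donor is NPV / probability.
implied_donor_value = npv / probability

print(f"implied discount rate: {implied_discount_rate:.1%}")
print(f"implied value of one new donor: ${implied_donor_value:,.0f}")
```

On these figures the implied discount rate is 4% and the implied value of one such donor is in the region of $17 billion.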

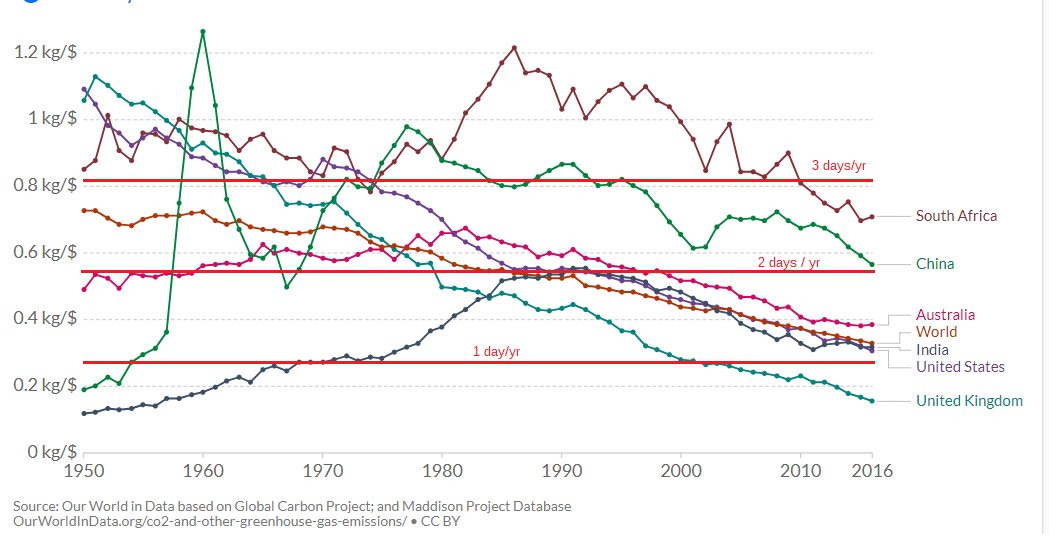

Time Cost of Emissions Reduction

Carbon intensity is the total amount of CO2 emissions of an economy divided by the total GDP of the economy; it represents the amount of carbon dioxide emitted for an economy to produce $1 worth of stuff. The goal is to get this number to be small.

Since ($ to reduce emissions/kg CO2 averted) * (kg CO2 emitted/$ produced) = % of money spent on offsetting all emissions = % of time spent on offsetting all emissions, at a constant cost of carbon emissions reduction, the carbon intensity is proportional to the number of days of full-time effort per year one should dedicate (through e.g. donations to effective climate charities).
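The identity above can be sketched in a few lines. The input values below are hypothetical placeholders chosen only to show the units working out; they are not the figures behind my graphs, and the 250 working days per year is also an assumption.

```python
def offset_days_per_year(cost_per_kg, carbon_intensity, working_days=250):
    """Days of full-time work per year needed to offset all emissions.

    cost_per_kg:      dollars spent per kg of CO2 averted
    carbon_intensity: kg of CO2 emitted per dollar produced
    working_days:     assumed number of working days in a year
    """
    # ($ / kg averted) * (kg emitted / $ produced) = fraction of income
    fraction_of_income = cost_per_kg * carbon_intensity
    return fraction_of_income * working_days


# Hypothetical inputs: $10 per tonne averted, 0.25 kg CO2 per dollar of GDP.
example_days = offset_days_per_year(cost_per_kg=0.01, carbon_intensity=0.25)
print(f"{example_days:.3f} days per year")
```

Holding the cost per kg fixed, doubling the carbon intensity doubles the number of days, which is the proportionality claimed above.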

The amount of CO2 produced does scale approximately with income, so this small percentage of total income, which applies to everyone, might be an interesting anchoring point for some kind of marketing campaign. I’ve proposed #AllInADaysWork, based on the UK value of 1 day/year, which for the marginal UK participant fully averts all the carbon they would have emitted on average.[3]

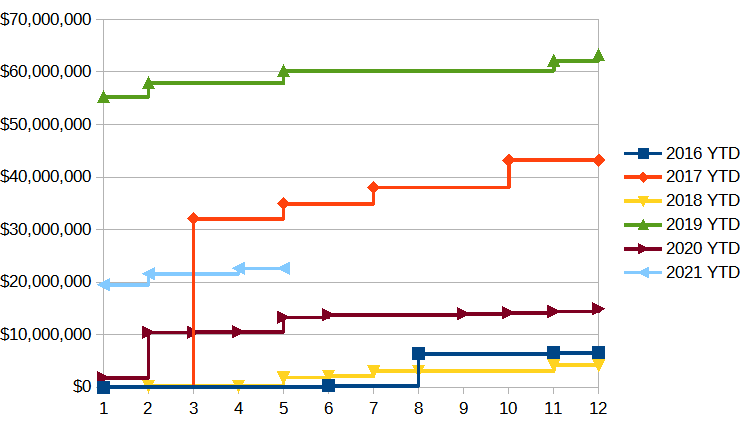

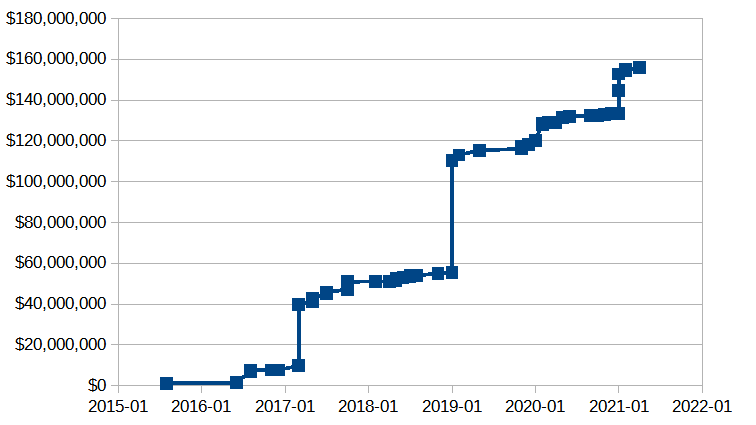

Cumulative Open Philanthropy AI Safety Grants

AI safety is very important; probably the world problem on which additional work would have the greatest direct impact. Therefore, the amount and distribution of money flowing into AI safety from Open Philanthropy (half the EA community) is also pretty important to know about. As such, I asked on Metaculus “How much will Open Philanthropy grant in their focus area of Potential Risks from Advanced Artificial Intelligence in 2021?”. As part of estimating this for myself, I looked at the distribution of grants made in past years, and plotted them as cumulative plots.

These plots are as of May 2021, though there were no new grants up until July 2021, so they’re accurate as of the time of me writing this sentence (and inaccurate as of the time of me rewriting this sentence in September; curse my slow posting!).

Grants tend to be either big, in the tens of millions of dollars, or small, less than a million. The two biggest grants, which you can see as big discontinuities, are the $30M grant in 2017 to buy a board seat at OpenAI, and the $55M grant in 2019 to start CSET.
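As a minimal sketch of how a cumulative series like this is built: the two large entries below are the grants named above, while the small entries are hypothetical placeholders I made up to show the shape, not real grant data.

```python
from itertools import accumulate

# (year, amount in $M). Only the two large grants come from the post;
# the small ones are hypothetical placeholders, not real grant data.
grants = [
    (2017, 30.0),  # OpenAI grant named in the post
    (2018, 0.5),   # hypothetical small grant
    (2019, 55.0),  # CSET grant named in the post
    (2020, 0.8),   # hypothetical small grant
]

# Sort by year, then take a running sum of the amounts.
grants.sort(key=lambda grant: grant[0])
running_totals = list(accumulate(amount for _, amount in grants))

for (year, amount), total in zip(grants, running_totals):
    print(f"{year}: +{amount:>5.1f}M -> cumulative {total:>5.1f}M")
```

The jumps in the running total at the two large grants are the discontinuities visible in the plots.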

The Metaculus community estimate is $53M in grants by the end of this year, with a range of $30M to $99M. From the shape of previous grants, this might come in the form of another large grant on the order of $30M, and indeed it did: after I made this graph, Open Philanthropy made a $39M grant to CSET, renewing their support from when they incubated it in 2019.

[1] Kyle Bogosian also runs the excellent eapolitics.org, where he has done in-depth evaluations of how good the 2020 presidential candidates, 2021 NYC mayoral candidates, and 2021 California gubernatorial recall candidates are.

[2] “Catfish” and “salmon” are somewhat broader than the names imply, representing other small and large fish, respectively.

[3] Possibly more on this in a future post?
